VCF 9.0 introduced a new way to set up and configure networking. Two models are available, depending on your business’s needs: Centralized Connectivity and Distributed Connectivity. I won’t go into full detail on the differences between the two; please read about them here: Setting up Network Connectivity.

Some notes on the network plumbing: it’s all Ubiquiti UniFi, and each ESX host has dual 10 GbE interfaces. My routing protocol of choice is BGP; read more about my experience here: BGP Peering NSX Tier-0 with Ubiquiti UDM Pro. This will be key, as I want my virtual networking to traverse the physical network (North/South).

My blog post on BGP peering is pending an update. I will work on that, but the existing post should still get the peering to work.

Deployment

From the vSphere Client, highlight the vCenter instance, then in the right pane navigate to Networks >> Network Connectivity.

You will be asked to choose a Gateway Type; ours will be Centralized Connectivity.

Networking Prerequisites

This is where we configure our Edges: select a size and give the Edge cluster object a unique name.

Clicking ‘Add’ brings up the configuration for the first Edge node. This section mainly covers the management configuration for the Edge, as well as the Edge TEPs.

The bottom was cut off; here is the remainder.

Once we save, we have the option of adding another Edge node or cloning the configuration.

Fill out the unique settings for the clone.
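Cloning boils down to copying the shared settings and overriding the per-node ones. As a rough planning aid (not something VCF itself runs), here is a minimal Python sketch of that idea. The field names, hostnames, and addresses are all hypothetical placeholders; they are not values from my lab and not VCF or NSX API fields.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EdgeNode:
    # All field names are illustrative; they are not VCF or NSX API fields.
    name: str
    mgmt_ip: str
    tep_ips: tuple
    size: str = "MEDIUM"             # shared setting, carried over by the clone
    mgmt_gateway: str = "192.0.2.1"  # shared setting (documentation range)


def clone_edge(source, name, mgmt_ip, tep_ips):
    """Copy the shared settings and override the per-node ones."""
    return replace(source, name=name, mgmt_ip=mgmt_ip, tep_ips=tuple(tep_ips))


def find_duplicates(nodes):
    """Return values (names or IPs) that appear more than once across the plan."""
    seen, dupes = set(), set()
    for node in nodes:
        for value in (node.name, node.mgmt_ip, *node.tep_ips):
            if value in seen:
                dupes.add(value)
            seen.add(value)
    return dupes


# Hypothetical example nodes using documentation-only address ranges.
edge01 = EdgeNode("edge01", "192.0.2.11", ("198.51.100.11", "198.51.100.12"))
edge02 = clone_edge(edge01, "edge02", "192.0.2.12",
                    ("198.51.100.13", "198.51.100.14"))

print(find_duplicates([edge01, edge02]))  # empty set when everything is unique
```

Running a check like this before filling out the wizard is an easy way to catch a management or TEP address that was accidentally left unchanged on the clone.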

There is an option to have the passwords auto-generated, or you can specify the ‘admin’ and ‘root’ passwords yourself.

Example of filling it out

The final step before deploying is ‘Workload Domain Connectivity’, which contains the BGP-related configuration and the Edge gateway uplink configuration.
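Before entering the BGP values, it can help to sanity-check the ASN plan. The sketch below is only an illustration: the two ASNs are hypothetical, and I am assuming a separate private ASN on each side of the peering. The private-use ranges themselves come from RFC 6996.

```python
# Private-use ASN ranges from RFC 6996 (16-bit and 32-bit).
PRIVATE_ASN_RANGES = ((64512, 65534), (4200000000, 4294967294))


def is_private_asn(asn):
    """True when the ASN falls inside one of the private-use ranges."""
    return any(low <= asn <= high for low, high in PRIVATE_ASN_RANGES)


def session_type(local_asn, remote_asn):
    """eBGP when the two sides use different ASNs, iBGP when they match."""
    return "iBGP" if local_asn == remote_asn else "eBGP"


# Hypothetical values: pick your own ASNs for the Tier-0 and the physical router.
tier0_asn = 65001
router_asn = 65000

print(session_type(tier0_asn, router_asn))
print(is_private_asn(tier0_asn), is_private_asn(router_asn))
```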

The next series of screenshots shows the configuration of two gateway uplinks for each Edge appliance.

I have two physical networks created (VLAN 3 and VLAN 4) as interfaces specifically for overlay and North-South communication.
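With two uplinks per Edge spread over two VLANs, it is easy to mistype an address. Here is a minimal sketch of a pre-flight check using Python’s standard `ipaddress` module. It assumes each Edge gets one uplink on VLAN 3 and one on VLAN 4; the subnets, Edge names, and addresses are made up for illustration and should be replaced with your own.

```python
import ipaddress

# Hypothetical subnets for the two uplink VLANs; substitute your own.
VLAN_SUBNETS = {
    3: ipaddress.ip_network("192.0.2.0/25"),
    4: ipaddress.ip_network("192.0.2.128/25"),
}

# Two gateway uplinks per Edge appliance, one per VLAN (addresses are made up).
UPLINKS = [
    ("edge01", 3, "192.0.2.11"),
    ("edge01", 4, "192.0.2.139"),
    ("edge02", 3, "192.0.2.12"),
    ("edge02", 4, "192.0.2.140"),
]


def check_uplinks(uplinks, subnets):
    """Return a list of problems; an empty list means the plan is consistent."""
    problems = []
    seen = set()
    for edge, vlan, ip in uplinks:
        addr = ipaddress.ip_address(ip)
        if vlan not in subnets:
            problems.append(f"{edge}: VLAN {vlan} has no subnet defined")
        elif addr not in subnets[vlan]:
            problems.append(f"{edge}: {ip} is outside VLAN {vlan} ({subnets[vlan]})")
        if addr in seen:
            problems.append(f"{edge}: {ip} is assigned more than once")
        seen.add(addr)
    return problems


print(check_uplinks(UPLINKS, VLAN_SUBNETS))
```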

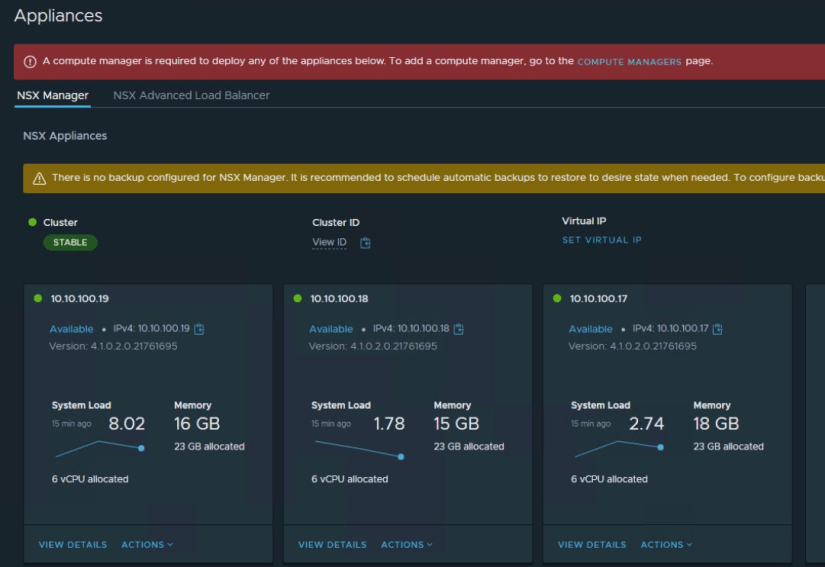

With the configuration complete, click Deploy. Take a look at some of the screenshots post-install.

The final step is to perform an Inventory Sync in VCF Operations. This lets Operations manage monitoring and password lifecycle.

Before the sync, the Edge appliances do not appear under Fleet Management >> Passwords.

From VCF Operations >> Inventory, select the Management Domain and perform a Sync Inventory.

After some time, the additional accounts will appear under Fleet Management >> Passwords.